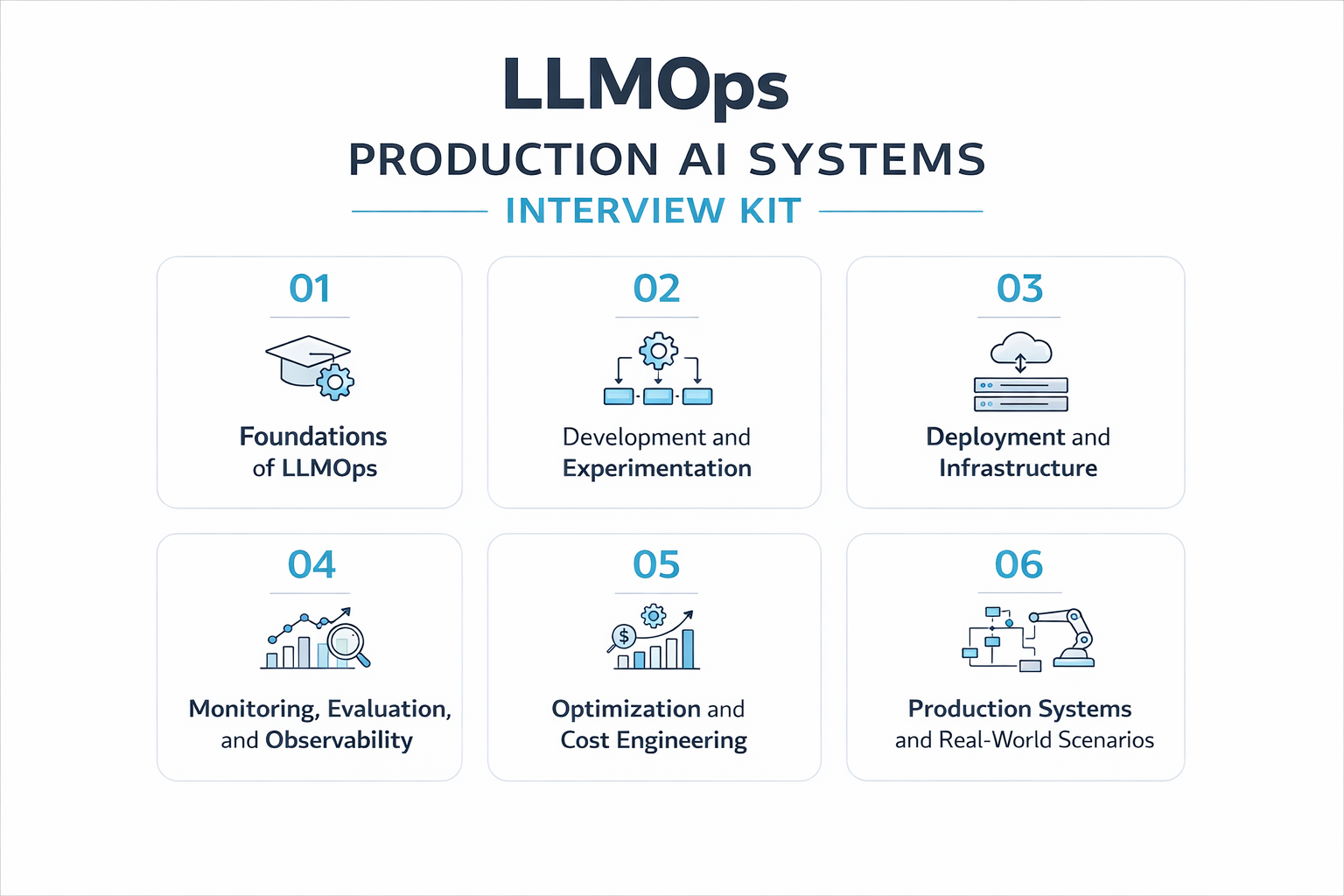

Category: Artificial Intelligence | MLOps | Production Systems

Level: Intermediate to Advanced

Volumes: 6

Total Questions: 220+

What Is This Course?

This course is a structured, interview-focused preparation kit for engineers and practitioners who work with, or want to work with, Large Language Models in production environments. It covers the complete LLMOps lifecycle, from understanding how LLM systems are architected to deploying, monitoring, optimizing, and continuously improving them at scale.

Unlike generic AI courses, this kit is built around real interview questions asked at top tech companies, AI-native startups, and enterprise teams hiring for LLMOps, ML Engineering, and AI Infrastructure roles. Every question reflects what practitioners actually face on the job.

Who Is This Course For?

ML Engineers transitioning from classical MLOps into LLM-based systems who need to close the gap between traditional model pipelines and modern LLM production stacks.

Backend and Platform Engineers building AI-powered products who need to understand how LLM systems behave differently from standard software systems.

Data Scientists moving into production roles who want to understand deployment, monitoring, and cost engineering beyond model development.

AI/ML Architects designing LLM system architecture for enterprise applications who need a structured reference across all operational layers.

Job Seekers actively interviewing for LLMOps, AI Engineering, or Generative AI roles at companies where production LLM experience is a core requirement.

What Will You Learn?

By completing this course, you will be able to confidently answer interview questions and solve real problems across six critical areas of production LLM systems.

Foundations covers how LLM systems differ from traditional ML systems, what the full LLM lifecycle looks like, and what operational challenges are unique to LLM applications.

Development and Experimentation covers how to engineer prompts at scale, select the right model, integrate retrieval, evaluate during development, and track experiments rigorously.

Deployment and Infrastructure covers how to deploy LLM systems to production using API-based, self-hosted, or hybrid patterns and how to optimize for latency, throughput, and security.

Monitoring, Evaluation and Observability covers how to measure output quality, detect hallucinations, trace failures, and build a reliable observability stack for LLM applications.

Optimization and Cost Engineering covers how to reduce inference cost, apply quantization, distill large models into smaller ones, and optimize prompts and RAG pipelines for production efficiency.

Production Systems and Real-World Scenarios covers how to design and operate production chatbots, enterprise RAG systems, agent pipelines, and multi-model routing systems under real-world constraints.

What Makes This Course Different?

Interview-first design. Every question in this kit reflects what is actually asked in LLMOps and AI Engineering interviews, not textbook theory.

Production-focused. The emphasis is on how systems behave under real traffic, with real costs, real failures, and real constraints, not just how models work in isolation.

Benchmark literacy included. A dedicated section covers 15+ industry benchmarks including MMLU, SWE-bench, GPQA, RAGAS, and LMSYS Arena so you can speak intelligently about model evaluation in any interview context.

Covers the full stack. From prompt templates to GPU memory management and from semantic caching to model distillation, this course does not leave gaps.

RAG and Agents treated correctly. Rather than deep-diving into RAG architecture or agent frameworks, this kit focuses specifically on how the LLM layer fits into those systems and what operational questions arise from that integration.

Course Structure

| Volume | Title | Focus |
|---|---|---|
| 1 | Foundations of LLMOps | Lifecycle, components, challenges |
| 2 | Development and Experimentation | Prompting, model selection, evals |
| 3 | Deployment and Infrastructure | Serving, scaling, latency, security |
| 4 | Monitoring, Evaluation and Observability | Quality metrics, tracing, debugging |
| 5 | Optimization and Cost Engineering | Quantization, distillation, cost control |
| 6 | Production Systems and Real-World Scenarios | End-to-end system design |

Prerequisites

Working knowledge of Python and REST APIs. Familiarity with basic machine learning concepts. Some exposure to LLM APIs such as OpenAI or similar. No deep research background is required, as this course is engineering- and operations-focused.
